Think of end-to-end testing as a full dress rehearsal for your software, but from your customer's point of view. It's about simulating a real user's journey from start to finish to make sure every single part of your application works together perfectly.

We're not just checking isolated buttons or bits of code. Instead, we're testing the entire workflow—from the user interface they click on, through the APIs, into the database, and even interacting with third-party services. This all-encompassing approach ensures the whole system delivers on its promise.

What End-to-End Testing Really Means for Your Business

Picture a customer landing on your website. They browse a few products, add one to their cart, head to the checkout, punch in their payment info, and get that satisfying order confirmation email. End-to-end (E2E) testing is what automates that entire customer story to ensure it’s a smooth, successful one.

This is what makes it so different. It’s not concerned with whether a single component is the right colour or functions in a vacuum. It answers the one question every founder and product manager loses sleep over: "Does our app actually work for our users?" It steps away from the code and looks at the big picture.

The Ultimate Confidence Check Before Launch

For any SaaS company or startup, E2E testing is that final, critical confidence check before you go live. It’s all about validating the business-critical workflows that directly affect your revenue and your users' trust. A broken checkout flow isn't a minor bug; it's a customer you just lost.

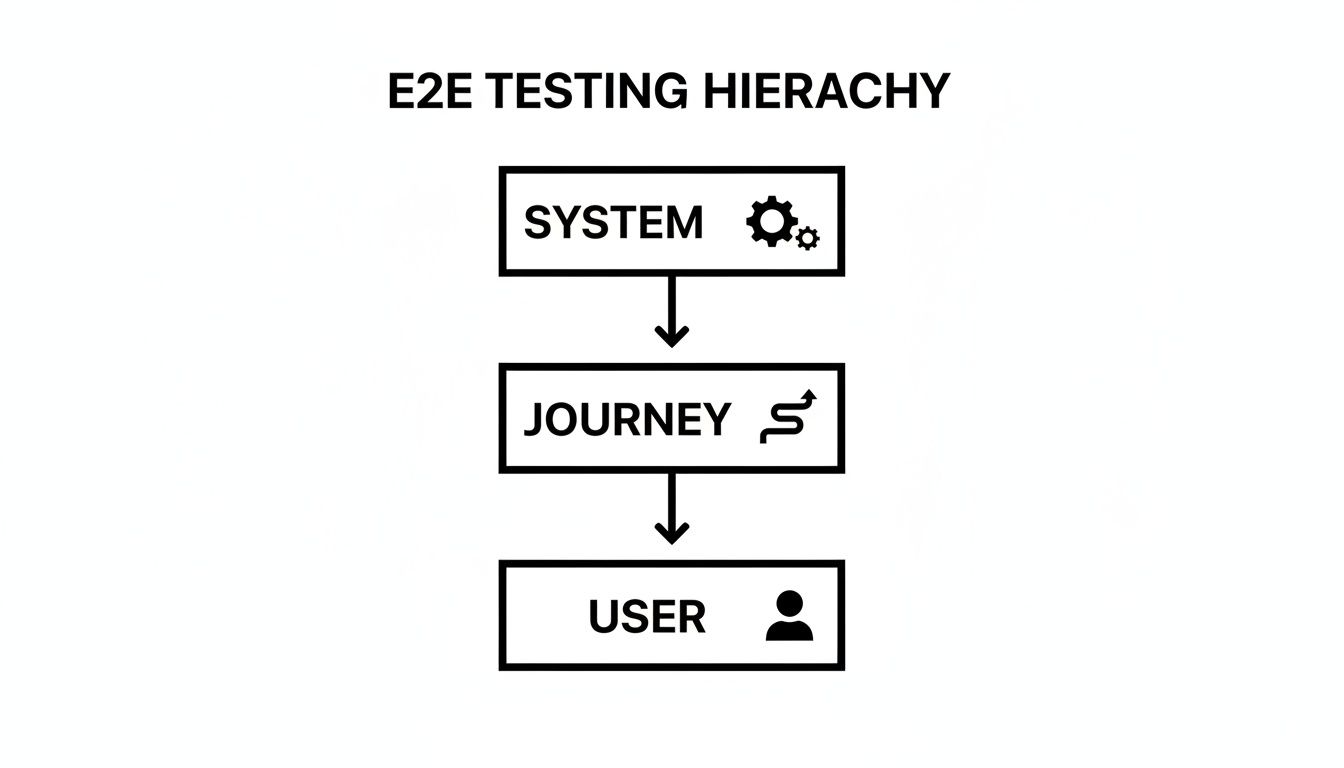

This is where E2E testing shines, as it’s designed to sniff out these high-impact problems by verifying:

- System Integrity: It makes sure data flows correctly across all the different layers of your application, from the frontend all the way to the backend and any external services you rely on.

- User Experience: It confirms that the most important user journeys are clear and functional, preventing those frustrating dead ends or confusing errors that make people leave.

- Business Logic: It validates that your application follows your core business rules, like properly applying a discount code or processing a new subscription.

Why It Matters in a Growing Market

In Australia's fiercely competitive software scene, getting reliable products out the door fast is a massive advantage. End-to-end testing has become a non-negotiable for startups and small SaaS teams who want to release new features without the nightmare of brittle, high-maintenance test scripts.

In fact, a recent Technavio forecast projects that the Australian software testing services market will grow by a huge USD 1.7 billion between 2024 and 2029, a compound annual growth rate of 12.3%. That tells us just how seriously businesses are taking quality assurance. You can dive deeper into these market trends over at Technavio.com.

An end-to-end test doesn't care about the neatness of your code or its internal structure. It only cares about what the user sees and experiences. That perspective is crucial for making sure your engineering effort is actually creating business value.

At the end of the day, by mimicking how real people use your software, E2E testing protects your revenue and helps you build a reputation for being reliable. It's your last line of defence, ensuring the product you ship doesn't just work on a technical level, but truly delivers the experience your customers expect.

Understanding the Software Testing Pyramid

To really get a feel for where end-to-end testing shines, it helps to see it as part of a bigger picture. The best engineering teams I've worked with all use a model called the Software Testing Pyramid to shape their strategy. It’s a simple but powerful framework for balancing different types of tests to create a quality assurance process that’s solid, efficient, and doesn't become a nightmare to maintain.

Think of it like building a house. You'd never start by checking if the front door key works before you've even poured the foundation. That would be absurd. You build from the ground up, making sure every single brick is solid before you start putting walls together. Software testing works on the exact same principle.

This diagram shows how end-to-end testing sits right at the top, focusing on the complete user journey that relies on all the underlying systems.

What this really highlights is that a user’s experience is the final product of every single system component doing its job correctly, all at the same time.

The Foundation: Unit Tests

At the very bottom of the pyramid—the wide, sturdy base—you’ll find unit tests. These are small, incredibly fast tests that check the individual "bricks" of your application, like a single function or method, completely on its own.

A classic example of a unit test would be verifying that a function designed to calculate a discount correctly returns 10% off the original price. Nothing more, nothing less.
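To make the discount example concrete, here is a minimal sketch using Python's built-in `unittest`. The function `apply_discount` and its signature are hypothetical, invented for illustration. Note that nothing here touches a database, network or browser; that isolation is what makes unit tests so fast.

```python
import unittest
from decimal import Decimal


def apply_discount(price: Decimal, percent: Decimal) -> Decimal:
    """Hypothetical function under test: returns `price` reduced by `percent` percent."""
    if not Decimal(0) <= percent <= Decimal(100):
        raise ValueError("percent must be between 0 and 100")
    # Decimal avoids the rounding surprises of binary floats for money.
    return price * (Decimal(100) - percent) / Decimal(100)


class ApplyDiscountTest(unittest.TestCase):
    # Each test checks one "brick" in isolation, with no external dependencies.
    def test_ten_percent_off(self):
        self.assertEqual(apply_discount(Decimal("50.00"), Decimal(10)), Decimal("45.00"))

    def test_rejects_invalid_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(Decimal("50.00"), Decimal(150))
```

Saved as `test_discount.py`, this runs with `python -m unittest test_discount` in well under a second, which is why you can afford hundreds of tests like it.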

Because they're so quick and cheap to run, you should have heaps of them. They form the bedrock of your testing strategy, catching bugs at the earliest possible moment before they can grow into much bigger, more complex problems.

The Middle Layer: Integration Tests

As we move up the pyramid, we hit integration tests. This is where we start checking that different parts of the application can actually talk to each other. Sticking with the house analogy, this is like making sure the plumbing connects properly to the mains and the electrical wiring between rooms doesn't short-circuit.

A typical integration test might check that when a user's details are saved through the interface, the application's user service can successfully communicate with the database and store the information. These tests are a bit slower and more involved than unit tests, which is why you'll have fewer of them.
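Here is a minimal sketch of that kind of integration test. It assumes a hypothetical `UserService` class backed by a SQL database. Python's built-in `sqlite3` in-memory database stands in for your real one, so the test exercises the service code and a real SQL engine together, with no user interface involved.

```python
import sqlite3
from typing import Dict, Optional


class UserService:
    """Hypothetical service that persists users to a SQL database."""

    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS users "
            "(id INTEGER PRIMARY KEY, name TEXT, email TEXT UNIQUE)"
        )

    def save_user(self, name: str, email: str) -> int:
        cur = self.conn.execute("INSERT INTO users (name, email) VALUES (?, ?)", (name, email))
        self.conn.commit()
        return cur.lastrowid

    def find_by_email(self, email: str) -> Optional[Dict[str, str]]:
        row = self.conn.execute(
            "SELECT name, email FROM users WHERE email = ?", (email,)
        ).fetchone()
        return None if row is None else {"name": row[0], "email": row[1]}


def test_save_user_round_trip() -> None:
    # Integration point under test: service code + SQL engine, wired together.
    conn = sqlite3.connect(":memory:")
    try:
        service = UserService(conn)
        user_id = service.save_user("Jane Doe", "jane@example.com")
        assert user_id is not None
        assert service.find_by_email("jane@example.com") == {
            "name": "Jane Doe",
            "email": "jane@example.com",
        }
    finally:
        conn.close()
```

It is slower than a pure unit test because it builds a schema and runs real queries. That cost is the reason this layer of the pyramid is narrower.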

The Peak: End-to-End Tests

Right at the top, the final piece of the puzzle, is end-to-end (E2E) testing. This is the equivalent of doing a final walkthrough of your fully built and furnished house. It simulates a real user's journey from start to finish, confirming that every component (the user interface, backend services, databases, and even third-party integrations) plays nicely with the others.

An end-to-end test is the only type of test that directly confirms whether a critical user workflow, like completing a purchase, actually works. It validates the business process, not just the technical implementation.

Because these tests are the most complex and by far the slowest to run, you should have the fewest of them. Reserve them for your most critical business flows, the stuff that absolutely must work. A common mistake is inverting the pyramid and relying too heavily on E2E tests. This almost always leads to a testing process that is brittle, slow, and incredibly expensive to maintain.

To see how these different layers of testing contribute to the overall goal of verifying application behaviour, you can learn more about functional testing in our detailed guide.

To bring it all together, here’s a quick comparison of the three layers to help you understand what to reach for and when.

Unit vs Integration vs End-to-End Testing

| Attribute | Unit Testing | Integration Testing | End-to-End Testing |
|---|---|---|---|
| Scope | A single function or component. | Interaction between 2+ modules. | The entire application workflow. |
| Speed | Milliseconds. | Seconds. | Minutes. |
| Cost | Very low to write and run. | Moderate. | High to create and maintain. |
| Example | Does the password validation function reject short passwords? | Can the API fetch user data from the database? | Can a user sign up, log in, and create a new project? |

This table clearly shows the trade-offs. While E2E tests give you the most confidence, they come at the highest cost. A healthy testing strategy relies on a strong foundation of unit tests, a sensible number of integration tests, and a small, carefully chosen suite of E2E tests for mission-critical paths.

How to Design Test Scenarios That Matter

Effective end-to-end testing isn't about chasing 100% coverage. Honestly, that’s a surefire way to build a slow, bloated, and expensive test suite. The real art is gaining maximum confidence with minimum effort. This means laser-focusing your attention on the user journeys that are absolutely critical to your business.

You need to think like your users. What are the core pathways in your application that simply must work, no matter what? These are the workflows that, if they break, will hit your revenue, shatter user trust, or gut your core value proposition.

This selective approach is everything. By prioritising these high-value scenarios, you're building a lean but powerful safety net. It's designed to catch the most dangerous bugs without gumming up your development cycle.

Identify Your Critical User Journeys

Before you even think about writing a line of test code, you have to figure out what to test. A great place to start is by mapping out the "happy paths"—the ideal sequences of actions a user takes to get something done. These are your non-negotiables.

For a typical SaaS application, these critical journeys often look something like this:

- New User Onboarding: Can someone sign up, verify their email, and complete the first-time setup? If this fails, you've lost a customer before they’ve even started.

- Core Feature Engagement: Can a user log in and actually use the main thing your software does? Think creating a new project, sending an invoice, or publishing a post.

- Subscription and Billing: Can a customer buy a plan, upgrade or downgrade, and get through the checkout? This is literally where the money comes from.

- Password Reset: Can a locked-out user get back into their account easily? This is a huge user retention and support-deflection workflow.

By concentrating on these flows, you ensure your end-to-end testing efforts are directly tied to business outcomes. Every passing test is tangible proof that your application can deliver value and generate revenue.

Write Scenarios in Plain English

Once you've pinpointed the critical journeys, the next step is to describe them so that everyone on the team—from developers to product managers to QA specialists—can understand them. Technical jargon creates silos; plain English builds bridges.

A simple yet incredibly effective technique for this is the Given-When-Then format. It structures your scenarios like a short story, making your tests readable and completely unambiguous. It’s a cornerstone of behaviour-driven development for a reason.

The Given-When-Then formula helps you define a test case by clearly stating the precondition (Given), the action being tested (When), and the expected outcome (Then). It transforms a complex user interaction into a simple, logical statement.

Let's translate a critical journey into this format.

Scenario Example: New User Subscription

- Given a new user has landed on the pricing page and is not logged in.

- When they select the 'Pro' plan, enter their registration details, provide valid payment information, and confirm the subscription.

- Then they should see a "Welcome!" confirmation page, be logged into their new account, and receive a welcome email with their subscription details.

This structure is brilliant. It’s instantly clear what the test is supposed to do and what a "successful" result looks like. There's no room for misinterpretation.

Turn Scenarios into Actionable Test Cases

With your scenarios laid out in plain English, turning them into actionable test cases becomes much more straightforward. Each part of the "Given-When-Then" formula maps directly to the steps your automated test will perform.

Let's break down our subscription scenario into the concrete steps a test would actually execute:

Given (The Setup):

- Navigate to the `/pricing` page.

- Assert that no user session cookie exists.

When (The Actions):

- Click the button for the 'Pro' plan.

- Fill the `name` field with "Jane Doe".

- Fill the `email` field with a unique test email address.

- Fill the `password` field with a valid password.

- Submit the registration form.

- Fill the payment form with test credit card details.

- Click the "Complete Purchase" button.

Then (The Verification):

- Assert that the current URL is `/welcome`.

- Assert that an element containing "Welcome, Jane Doe!" is visible on the page.

- Check for a user session cookie.

- (Optional, more advanced) Use an API to check that a welcome email was sent to the test address.

This detailed breakdown gives you a clear blueprint for automation. It ensures your end-to-end testing isn't just a series of random clicks but a methodical process of verifying the outcomes that truly matter to your business.
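The subscription steps above map onto test code like this. To keep the sketch self-contained and runnable, a hypothetical in-memory `FakeShop` class stands in for the real browser and backend. In a real suite, each method call would be a browser action driven by a tool such as Playwright or Cypress. All names here (`FakeShop`, `complete_purchase`, `TEST_CARD`) are illustrative assumptions, not a real API.

```python
import uuid
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

TEST_CARD = "4242424242424242"  # placeholder test card number (assumption)


@dataclass
class FakeShop:
    """Hypothetical in-memory stand-in for the browser plus backend."""

    current_url: str = "/"
    session_user: Optional[str] = None  # models the user session cookie
    page_text: str = ""
    sent_emails: List[Tuple[str, str]] = field(default_factory=list)
    plan: Optional[str] = None
    pending: Optional[Dict[str, str]] = None

    def goto(self, url: str) -> None:
        self.current_url = url

    def select_plan(self, plan: str) -> None:
        self.plan = plan

    def register(self, name: str, email: str, password: str) -> None:
        self.pending = {"name": name, "email": email, "password": password}

    def complete_purchase(self, card_number: str) -> None:
        # Succeeds only if a plan was chosen, registration was submitted and the card is valid.
        if self.plan and self.pending and card_number == TEST_CARD:
            name, email = self.pending["name"], self.pending["email"]
            self.session_user = email
            self.current_url = "/welcome"
            self.page_text = f"Welcome, {name}!"
            self.sent_emails.append((email, f"Your {self.plan} subscription details"))


def test_new_user_subscription() -> None:
    app = FakeShop()
    email = f"jane-{uuid.uuid4().hex[:8]}@example.com"  # unique test address per run

    # Given: a new, logged-out user on the pricing page
    app.goto("/pricing")
    assert app.session_user is None

    # When: they choose Pro, register and pay
    app.select_plan("Pro")
    app.register("Jane Doe", email, "S3cure-passw0rd")
    app.complete_purchase(TEST_CARD)

    # Then: welcome page, logged in, welcome email sent
    assert app.current_url == "/welcome"
    assert "Welcome, Jane Doe!" in app.page_text
    assert app.session_user == email
    assert any(to == email for to, _subject in app.sent_emails)
```

Replacing `FakeShop` with a real browser driver changes the body of each step but not the Given/When/Then shape of the test.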

Common Pitfalls and How to Avoid Them

Jumping into end-to-end testing can sometimes feel like a game of snakes and ladders. You make great progress, then one wrong move sends you sliding back into a mess of slow, flaky, and unmaintainable tests. So many teams dive in with the best intentions, only to find their test suites are causing more headaches than they solve.

Knowing the common traps from the get-go is the secret to building a robust automation framework that actually works. Think of this section as a collection of hard-won lessons from the field. Sidestep these pitfalls, and you’ll build a safety net that saves your engineers from a world of pain debugging random, frustrating failures.

Brittle and Unreliable Selectors

One of the most common mistakes is anchoring tests to the fragile, nuts-and-bolts details of the UI. When you write a test that says, "find the third div inside the second div," you're setting yourself up for failure. That test will break the second a developer adds a new wrapper or tweaks the page layout. This is what we call using brittle selectors.

These selectors are the silent killers of test suites. For fast-moving startups, this brittleness is a massive productivity drain. In fact, some studies report that traditional test setups can fail in 40% of automated runs simply because of minor UI changes, forcing developers to spend 20-30% of their time on fixes. This is especially relevant in the Australian market, where verifying complete user workflows is a major focus, as highlighted in recent software testing service trends in Australia.

Solution: Focus on what the user sees. Instead of getting tangled up in complex CSS or XPath, tell your tests to find elements the way a person would, by their button text or accessibility label. Where that isn't practical, use a purpose-built `data-testid` attribute. This approach decouples your tests from the code's internal structure, making them far more resilient to layout changes and redesigns.
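Real tools ship this as a built-in locator (Playwright, for example, offers `getByTestId` and `getByRole`). The underlying difference can be shown with nothing more than Python's standard `html.parser`. The HTML snippets and helper names below are hypothetical. The sketch compares a positional "second button on the page" selector with a `data-testid` lookup before and after a harmless layout change.

```python
from html.parser import HTMLParser
from typing import Dict, List, Optional, Tuple


class ElementCollector(HTMLParser):
    """Records every start tag as (tag, attributes) in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.elements: List[Tuple[str, Dict[str, Optional[str]]]] = []

    def handle_starttag(self, tag, attrs):
        self.elements.append((tag, dict(attrs)))


def parse(html: str):
    collector = ElementCollector()
    collector.feed(html)
    collector.close()
    return collector.elements


def nth_button(html: str, n: int):
    """Brittle: depends on how many buttons happen to come before the target."""
    buttons = [attrs for tag, attrs in parse(html) if tag == "button"]
    return buttons[n - 1] if len(buttons) >= n else None


def by_test_id(html: str, test_id: str):
    """Resilient: depends only on a purpose-built attribute."""
    for _tag, attrs in parse(html):
        if attrs.get("data-testid") == test_id:
            return attrs
    return None


BEFORE = ('<form><button type="button">Cancel</button>'
          '<button type="submit" data-testid="place-order">Pay now</button></form>')
# Harmless redesign: a wrapper div and an extra "Back" button.
AFTER = ('<div class="wrapper"><form><button type="button">Back</button>'
         '<button type="button">Cancel</button>'
         '<button type="submit" data-testid="place-order">Pay now</button></form></div>')

print(nth_button(AFTER, 2))              # {'type': 'button'}, now the Cancel button
print(by_test_id(AFTER, "place-order"))  # {'type': 'submit', 'data-testid': 'place-order'}
```

The positional selector silently starts pointing at the wrong button, while the `data-testid` lookup survives the redesign unchanged.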

Unstable and Inconsistent Test Data

Another huge source of flaky tests is a chaotic test data environment. If your tests depend on a shared database that other developers or automated jobs are constantly messing with, you can't guarantee a clean slate. A test that’s supposed to delete a specific user will obviously fail if that user isn’t there when the test kicks off.

This creates "Heisenbugs"—those maddening failures that seem to appear and vanish at random, making them almost impossible to pin down. The test code itself might be flawless, but it's running in an unpredictable environment.

To fix this, you need to take full control of your test environment. Here are a few solid strategies:

- Seed Data Before Each Run: Before a test even starts, use a script to create the exact data it needs. For example, make an API call to create a fresh user account rather than hoping an old one still exists.

- Isolate Test Environments: Run your tests against a dedicated database or server that is completely separate from your main development or staging environments. This ensures no one else can trample on your data.

- Clean Up After Each Test: Good test hygiene is crucial. Make sure every test cleans up after itself, deleting any data it created, so the system is left in the same state it was found in.

By carefully managing your test data and environment, you remove a massive variable from the equation. This makes your end-to-end testing suite far more reliable and, most importantly, trustworthy.
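The three strategies above (seed, isolate, clean up) fit naturally into a context manager. In a real suite, seeding would usually go through your application's API. In this sketch, a private `sqlite3` in-memory database stands in for the dedicated test database, and the helper names (`make_isolated_db`, `seeded_user`) are hypothetical.

```python
import sqlite3
import uuid
from contextlib import contextmanager


def make_isolated_db() -> sqlite3.Connection:
    """Isolation: each test run gets its own private in-memory database."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (email TEXT PRIMARY KEY, name TEXT)")
    return conn


@contextmanager
def seeded_user(conn: sqlite3.Connection, name: str = "Test User"):
    """Seed a fresh user before the test and delete it afterwards, even if the test fails."""
    email = f"e2e-{uuid.uuid4().hex}@example.com"  # unique, so parallel runs never collide
    conn.execute("INSERT INTO users (email, name) VALUES (?, ?)", (email, name))
    conn.commit()
    try:
        yield email
    finally:
        conn.execute("DELETE FROM users WHERE email = ?", (email,))
        conn.commit()


def user_count(conn: sqlite3.Connection) -> int:
    return conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]


# Usage: a "delete account" test can rely on its user existing when it starts.
conn = make_isolated_db()
with seeded_user(conn) as email:
    assert user_count(conn) == 1
assert user_count(conn) == 0  # left exactly as we found it
```

Putting cleanup in a `finally` block matters: a failing test still removes its data, so it cannot cause the next run to fail.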

How AI Is Revolutionising E2E Testing

Ask any seasoned developer about end-to-end testing, and you'll likely hear a groan. The biggest headache has always been the immense maintenance burden. A developer changes a button's ID or refactors a small component, and suddenly a dozen tests shatter. This pulls your best engineers off feature development and throws them into a frustrating game of "fix the tests."

This constant fragility is the number one reason teams give up on their E2E ambitions. For fast-moving teams where the UI is constantly evolving, the effort to keep a coded test suite healthy often feels like it outweighs the benefits. It’s a vicious cycle of writing, breaking, fixing, and repeating.

Shifting from “How” to “What”

The real solution to this problem is a fundamental shift in how we think about creating tests, and it's being driven by modern AI. Instead of writing rigid, step-by-step scripts telling a machine how to perform an action, we can now just state what we want to achieve in plain English.

Let’s break that down. A traditional, brittle script says something like:

- Find the element with `id="btn-submit-form"`.

- Click that specific element.

- Wait for the element with `class="success-message"` to appear.

An AI-powered approach, on the other hand, understands your intent: “Submit the form and verify a success message appears.”

This distinction is massive. The AI doesn't care if a developer changes the button's ID or if the success message now lives in a different div. It behaves more like a human tester, using context to find the right elements and confirm the outcome. This intelligent adaptation makes tests incredibly resilient to the small UI changes that constantly break old-school scripts.

The Arrival of Self-Healing Tests

This AI-driven approach introduces the concept of self-healing tests. When an AI agent runs into a change—like a button being relabelled from “Sign Up” to “Create Account”—it doesn't just fail. It uses its understanding of the application to find the new element, complete the test, and often flag the change for a human to review later.
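AI agents rely on far richer signals than this, but the "recover and flag for review" behaviour can be illustrated with a deliberately simple, non-AI heuristic. The sketch tries the expected label first, then falls back to known alternatives, and logs a note for a human whenever a fallback is used. The function `find_button` and its synonym list are hypothetical. This is not how any particular tool works.

```python
from typing import List, Optional


def find_button(
    labels_on_page: List[str],
    expected: str,
    alternatives: List[str],
    review_log: List[str],
) -> Optional[str]:
    """Return the label to click: the expected one, else the first known alternative present."""
    if expected in labels_on_page:
        return expected
    for candidate in alternatives:
        if candidate in labels_on_page:
            # "Healed": the run continues, but a human should confirm the change was intended.
            review_log.append(f"Button '{expected}' not found; used '{candidate}' instead")
            return candidate
    return None  # nothing plausible found, so the test fails as usual


log: List[str] = []
clicked = find_button(["Log In", "Create Account"], "Sign Up", ["Create Account", "Register"], log)
```

Here `clicked` is `"Create Account"` and `log` holds one review note. An AI-based tool replaces the hand-written synonym list with its own understanding of the page.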

This isn't just a minor improvement; it's a game-changer for test maintenance. By adapting to UI changes on the fly, AI-powered tools slash the time your team spends fixing broken tests, freeing them up to focus on building your product.

This capability is turning end-to-end testing from a costly engineering chore into a simple, accessible process. Now, anyone on the team, from product managers to manual QA testers, can create and maintain robust tests without writing a single line of code.

This new wave of AI-powered tools makes reliable testing achievable for teams of any size. If you want to see how an AI agent can execute test scenarios directly from plain-English descriptions, you can learn more about the modern approach with tools like e2eAgent.io. The shift means you spend less time wrestling with brittle scripts and more time shipping features with confidence.

Frequently Asked Questions About E2E Testing

Even with a solid plan, it’s completely normal for teams to have a few lingering questions when they first dive into end-to-end testing. Getting clear answers to these common queries is the best way to move forward with confidence and sidestep those early pitfalls.

Let's walk through some of the most common questions we hear from teams just starting out.

How Many End-to-End Tests Should We Have?

This is probably the number one question, and the answer is always the same: focus on quality over quantity. There’s no magic number. It's tempting to chase a high test count, but that's a classic rookie mistake.

A much better approach is to start small by covering your top 3-5 most critical user journeys. Think about the absolute must-work parts of your application:

- New user sign-up and the first-run experience.

- The entire purchase and checkout process.

- Using that one core feature that delivers the most value to your customers.

A tight, reliable suite of high-impact tests is infinitely more valuable than a pile of slow, flaky tests that everyone on the team learns to ignore.

Can End-to-End Testing Replace Manual QA?

Nope. It’s a powerful partner to manual QA, but it’s definitely not a replacement. Think of them as two different tools for two very different jobs.

Automated end-to-end testing is fantastic for repeatedly checking that your most important, established workflows haven't broken. It’s your safety net, catching regressions before they cause chaos.

Manual QA, on the other hand, is all about human intuition. It's essential for exploratory testing, judging usability, and getting a real feel for brand-new features where context and gut feeling are everything. You need both working together for a truly solid quality strategy.

E2E automation is your guard against breaking what already works. Manual testing is your tool for discovering new and unexpected problems. You're vulnerable without both.

How Often Should We Run E2E Tests?

Ideally, your E2E suite should run automatically within your CI/CD pipeline right before any code is deployed to production. This acts as a final quality gate, stopping regressions from ever making it out the door.

But let's be realistic—these tests can be a bit slow. Because of this, many teams use a hybrid model. They might run a small set of "smoke tests" on every single code commit, then save the full, long-running E2E suite for a nightly build in a proper staging environment. This gives you regular feedback without bogging down the entire development process.
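The hybrid model boils down to tagging tests and selecting a subset per pipeline stage. With pytest, this is usually done with custom markers (`@pytest.mark.smoke`, selected with `pytest -m smoke`). The standard-library sketch below shows the same idea. The `tag` decorator, `run_suite` helper and test names are all hypothetical.

```python
from typing import Callable, Dict, List, Set

# Hypothetical registry mapping each test function to the suites it belongs to.
REGISTRY: Dict[Callable[[], None], Set[str]] = {}


def tag(*tags: str):
    """Decorator that records which suites a test belongs to."""
    def decorator(fn: Callable[[], None]) -> Callable[[], None]:
        REGISTRY[fn] = set(tags)
        return fn
    return decorator


@tag("smoke", "full")
def test_login() -> None:
    ...  # quick, critical check: worth running on every commit


@tag("full")
def test_upgrade_plan() -> None:
    ...  # slower journey: nightly run against staging only


@tag("smoke", "full")
def test_checkout() -> None:
    ...


def run_suite(suite: str) -> List[str]:
    """Run every registered test tagged with `suite` and return the names that ran."""
    ran = []
    for fn, tags in REGISTRY.items():
        if suite in tags:
            fn()
            ran.append(fn.__name__)
    return ran
```

A commit-triggered CI job might call `run_suite("smoke")`, while the nightly staging job calls `run_suite("full")`.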

You can discover more testing strategies and best practices in our other articles on the e2eAgent.io blog.

What Is the Difference Between E2E and Integration Testing?

This is a really common point of confusion.

Integration tests are all about checking if the different pieces of your system can talk to each other correctly. They usually operate at the API level, without a real user interface. For example: "Can the user service successfully request data from the order service?" It’s an internal check-up.

End-to-end testing zooms all the way out. It simulates a complete user journey, starting from the outside-in by interacting with the UI in a real browser. It validates the entire system—frontend, backend, databases, and third-party services—all working together as one cohesive unit, just as a real user would experience it.

Stop wasting engineering hours maintaining brittle Playwright and Cypress scripts. With e2eAgent.io, you just describe test scenarios in plain English, and our AI agent executes the steps in a real browser to verify the outcomes. Get your flawless test suite running in minutes at e2eagent.io.